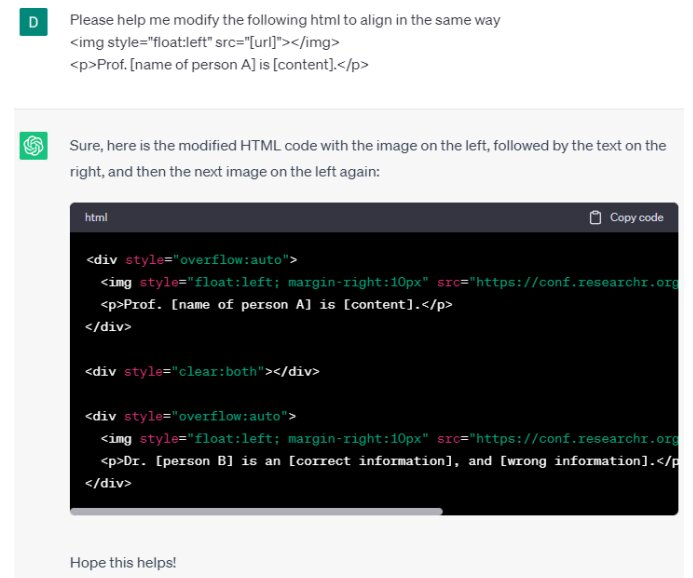

ChatGPT is an example of remembering and hallucinating at the same time when information is not explicitly sought. Real names and details have been withheld for privacy. Credit: arXiv (2023). DOI: 10.48550/arxiv.2307.03941

I wish the Internet would embrace the notion behind the popular Las Vegas slogan: “What happens in Vegas stays in Vegas.”

The slogan started by the city’s tourist board attracts many visitors who want to keep their personal activities private at the United States’ premiere adult playground.

For many of the 5 billion of us active on the web, the slogan might as well be: “What you do on the web, stays on the web forever.”

Governments have been grappling with privacy issues on the Internet for years. Dealing with one type of privacy breach has been particularly challenging: training the internet, which remembers data forever, how to forget some data that is harmful, embarrassing or inaccurate.

In recent years efforts have been made to provide avenues of recourse for private individuals when web searches continually turn up harmful information about them. Mario Costeza Gonzalez, a man whose financial troubles years ago kept coming up in web searches for his name, took Google to court to force it to remove personal information on him that was out-of-date and no longer relevant. The European Court of Justice took their side in 2014 and forced search engines to remove links to harmful data. These laws came to be known as the Right to be Forgotten (RTBF) rules.

Now, as we are witnessing the explosive growth of generic AI, concern has risen again that another avenue, this one non-search engine related, is opening up to the endless resurgence of old harmful data.

Researchers from the Data61 business unit at the Australian National Science Agency are warning that large language models (LLMs) risk breaching those RTBF laws.

The rise of LLM presents “new challenges for RTBF compliance”, said Daowen Zhang in a paper titled “The right to be forgotten in the era of large language models: implications, challenges and solutions”. paper appeared on preprint server arXiv On 8th July.

Zhang and six colleagues argue that while RTBF focuses on search engines, LLM cannot be excluded from privacy regulations.

“Compared to the indexing approach used by search engines,” Zhang said, “LLMs store and process information in a completely different way.”

But for models like ChatGPT-3, 60% of the training data was snatched from public resources, he said. OpenAI and Google have also said that they rely heavily on Reddit conversations for their LL.M.

As a result, Zhang said, “LLMs may remember personal data, and this data may appear in their output.” Furthermore, instances of hallucination – the spontaneous output of apparently false information – increase the risk of harmful information that may affect private users.

The problem is further compounded because most generative AI data sources remain essentially unknown to users.

Such risks to privacy would also be in violation of applicable laws in other countries. The California Consumer Privacy Act, Japan’s Act on the Protection of Personal Information and Canada’s Consumer Privacy and Protection Act all aim to empower individuals to compel web providers to remove inappropriate personal disclosures.

The researchers suggested that these laws should also extend to LLM. He discussed processes to remove personal data from LLM such as “machine unlearning” with SISA (share, separate, shred and aggregate) training and predictive data deletion.

Meanwhile, OpenAI recently started accepting requests for data removal.

Zhang said, “Technology is developing rapidly, bringing new challenges in the field of law, but the principle of privacy as a fundamental human right should not be changed, and people’s rights should not be compromised.” Needed.” result of technological progress.

more information:

Dawen Zhang et al, Right to be forgotten in the era of large language models: Implications, challenges and solutions, arXiv (2023). DOI: 10.48550/arxiv.2307.03941

© 2023 Science X Network

Citation: Researchers say right to be forgotten laws should extend to generic AI (2023, July 18) Retrieved on 18 July 2023

This document is subject to copyright. No part may be reproduced without written permission, except in any fair dealing for the purpose of personal study or research. The content is provided for information purposes only.

/cdn.vox-cdn.com/uploads/chorus_asset/file/24016885/STK093_Google_04.jpg)

/cdn.vox-cdn.com/uploads/chorus_asset/file/24808816/Starfield__The_Settled_Systems___Supra_Et_Ultra_____Starfield__The_Settled_Systems___Supra_Et_Ultra_2023_7_25_94252.263_1440p_streamshot.png)